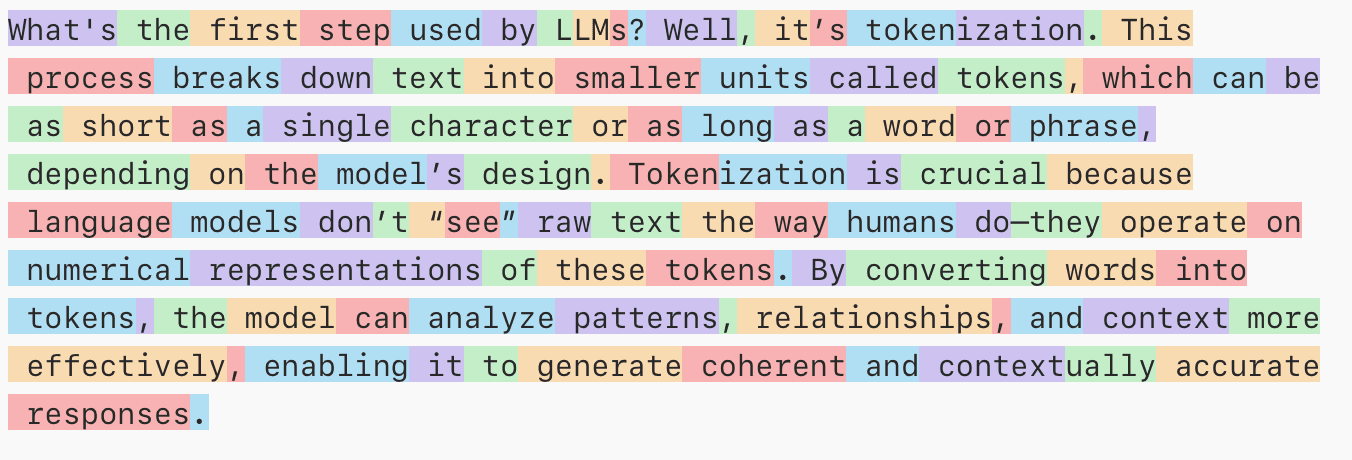

What is Tokenization in GPT?

When we were kids in school, we learned our first language step by step: first the alphabet, then words, then sentences. Whenever we have a conversation with someone, our mind automatically breaks each sentence into individual words and works out what each word means. Isn’t that cool?

What if I told you that a similar idea sits behind the AI tools we use in our everyday lives? Let’s dive into this process, known as tokenization.

Tokenization

Unlike humans, machines cannot take in a text input as a whole; they work best with numbers. So, to make human language easier for a model to process, the input query is first tokenized.

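Tokenization is the process of breaking a query into smaller pieces called tokens and then converting those tokens into numbers. For simplicity, the examples here treat each word as one token; GPT’s actual tokenizer often splits words into smaller sub-word pieces.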

Let’s take the sentence “Hello How are you?”. It is first broken into individual pieces called tokens:

Tokens: ["Hello", " ", "How", " ", "are", " ", "you", "?", "EOS"]

Here:

Spaces are tokens as well.

EOS stands for End of Sequence and marks the end of the sentence.
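A minimal Python sketch of this splitting step, assuming a simple word-level rule (runs of letters or digits, single spaces, and single punctuation marks each become a token) and a plain "EOS" string as the end marker. GPT’s real tokenizer uses byte-pair encoding over sub-word pieces, so treat this only as an illustration of the example above:

```python
import re

# Hypothetical marker string for the End of Sequence token.
EOS = "EOS"

def simple_tokenize(text: str) -> list[str]:
    """Split text into words, single spaces, and punctuation, then append EOS.

    A toy word-level tokenizer, NOT the byte-pair encoding GPT actually uses.
    """
    # \w+ matches a run of letters/digits, \s matches one whitespace character,
    # [^\w\s] matches a single punctuation character such as "?".
    tokens = re.findall(r"\w+|\s|[^\w\s]", text)
    tokens.append(EOS)
    return tokens

print(simple_tokenize("Hello How are you?"))
# ['Hello', ' ', 'How', ' ', 'are', ' ', 'you', '?', 'EOS']
```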

Converting to Numbers

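Since machines can’t work with the tokens directly, each token is then converted into a fixed number, often called a token ID. These numbers come from the tokenizer’s vocabulary, which is fixed before the model is trained, so the same token always maps to the same number. The numbers below are only illustrative: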

Hello → 342

How → 542

are → 2412

you → 1412

? → 112

After this step, the token list looks something like this:

Tokens: [342, 542, 2412, 1412, 112, EOS]
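The lookup step can be sketched in Python as a plain dictionary. The IDs for the five words come from the illustrative mapping above; the ID 0 for EOS is a made-up placeholder, and, like the example, the sketch simply drops the space tokens. Real vocabularies are far larger and are built when the tokenizer is trained:

```python
# Toy vocabulary using the illustrative IDs from the example.
# The EOS ID of 0 is a hypothetical placeholder, not a real GPT value.
VOCAB = {"Hello": 342, "How": 542, "are": 2412, "you": 1412, "?": 112, "EOS": 0}

# Reverse mapping so IDs can be turned back into tokens.
ID_TO_TOKEN = {token_id: token for token, token_id in VOCAB.items()}

def encode(tokens: list[str]) -> list[int]:
    """Map each token to its ID, skipping space tokens as the example does."""
    return [VOCAB[t] for t in tokens if t != " "]

def decode(ids: list[int]) -> list[str]:
    """Map IDs back to their token strings."""
    return [ID_TO_TOKEN[i] for i in ids]

tokens = ["Hello", " ", "How", " ", "are", " ", "you", "?", "EOS"]
ids = encode(tokens)
print(ids)          # [342, 542, 2412, 1412, 112, 0]
print(decode(ids))  # ['Hello', 'How', 'are', 'you', '?', 'EOS']
```

Keeping a reverse dictionary makes the mapping two-way, which is how numbers produced by a model are turned back into text.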

Special Characters

You might be wondering why the original token list, before the conversion to numbers, also contained spaces, and what the EOS at the end is. These are special characters, also called special tokens.

Spaces → Spaces are part of the actual text, so a tokenizer can either give them a token of their own or attach them to neighboring tokens. GPT-style tokenizers usually do the latter, attaching a space to the start of the next word (for example " How").

End of Sequence (EOS) → EOS tells the model that the sequence is complete. In other words, it marks the end of a sentence or text, much like a full stop in English.

There are many other special tokens, and different models and tokenizers use more or fewer of them.
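To illustrate the second option for spaces, here is a toy Python tokenizer that attaches a single leading space to the following word instead of emitting separate space tokens. It is only a regex-based sketch, assuming single spaces between words; it borrows the idea from GPT-2-style tokenizers but does not implement their byte-pair encoding:

```python
import re

def tokenize_with_attached_spaces(text: str) -> list[str]:
    """Glue each single leading space onto the token that follows it.

    Toy illustration only; assumes words are separated by single spaces.
    """
    # " ?" optionally grabs one space, then either a word (\w+)
    # or a single punctuation character ([^\w\s]).
    return re.findall(r" ?\w+| ?[^\w\s]", text)

sentence = "Hello How are you?"
tokens = tokenize_with_attached_spaces(sentence)
print(tokens)  # ['Hello', ' How', ' are', ' you', '?']

# No separate space tokens, yet the original text is still recoverable.
print("".join(tokens) == sentence)  # True
```

Because the spaces travel with the words, joining the tokens back together reproduces the original sentence exactly.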

How does GPT use tokens?

GPT uses tokens to predict the next token based on all the tokens it has seen so far. It adds each predicted token to the sequence and repeats, until it generates the End of Sequence (EOS) token or reaches a length limit.

User: "The boy is"

GPT predicts: "running"

Sequence: "The boy is running"

GPT predicts: "fast"

GPT predicts: "in"

GPT predicts: "the"

GPT predicts: "market"

Final sequence: "The boy is running fast in the market" + [EOS]
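The loop behind this example can be sketched in Python. The `predict_next` function and its lookup table are hypothetical stand-ins for the neural network: they only look at the last token, whereas GPT scores every token in its vocabulary using the whole sequence so far. The `max_new_tokens` limit is an assumed safeguard that stops generation if EOS never appears:

```python
EOS = "EOS"

# Hypothetical stand-in for the model: maps the last token to the next one.
# A real GPT uses a neural network over the entire sequence instead.
NEXT_TOKEN = {
    "is": "running",
    "running": "fast",
    "fast": "in",
    "in": "the",
    "the": "market",
    "market": EOS,
}

def predict_next(sequence: list[str]) -> str:
    """Return the next token (toy rule: look up only the last token)."""
    return NEXT_TOKEN.get(sequence[-1], EOS)

def generate(prompt: list[str], max_new_tokens: int = 20) -> list[str]:
    """Predict one token at a time until EOS appears or the limit is reached."""
    sequence = list(prompt)
    for _ in range(max_new_tokens):
        token = predict_next(sequence)
        sequence.append(token)  # the prediction becomes part of the input
        if token == EOS:
            break
    return sequence

result = generate(["The", "boy", "is"])
print(" ".join(result))  # The boy is running fast in the market EOS
```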

In short: tokenization is how AI takes our natural language, slices it into manageable pieces, and turns those pieces into numbers, making it possible for machines to “understand” and respond to us.