Explaining Vector Embeddings to Mom

Hey Mom, today I want to tell you about something called vector embeddings. You might think this is some rocket-science concept from the world of AI, but in fact, it’s a lot like things you already understand from everyday life.

Imagine a Giant Map of Everything

Think of all the words, pictures, or songs in the world as points on a giant map. On this map, things that are similar are placed closer together, and things that are very different are far apart.

For example:

“Apple” and “Banana” are close together because they are both fruits.

“Apple” and “Car” are far apart because they are very different.

That map is what computers use. To place things on it, they turn each thing into a vector, which is just a list of numbers that tells the computer where that thing belongs on the map.
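For anyone curious what "close together" and "far apart" look like in code, here is a minimal Python sketch. It assumes each word gets just three hand-picked numbers; those values are invented for illustration, while real embedding models learn their numbers and use far more of them. Distance on the map is measured as a straight line between the points.

```python
import math

# Invented 3-number "map coordinates", for illustration only.
# Real embedding models learn these numbers and use far more of them.
toy_map = {
    "Apple": [0.9, 0.8, 0.1],
    "Banana": [0.8, 0.9, 0.2],
    "Car": [0.1, 0.0, 0.9],
}

# math.dist gives the straight-line (Euclidean) distance between two points.
apple_banana = math.dist(toy_map["Apple"], toy_map["Banana"])
apple_car = math.dist(toy_map["Apple"], toy_map["Car"])

print(f"Apple to Banana: {apple_banana:.2f}")  # → Apple to Banana: 0.17
print(f"Apple to Car: {apple_car:.2f}")        # → Apple to Car: 1.39
```

The smaller number for Apple and Banana is the code version of "they sit close together on the map."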

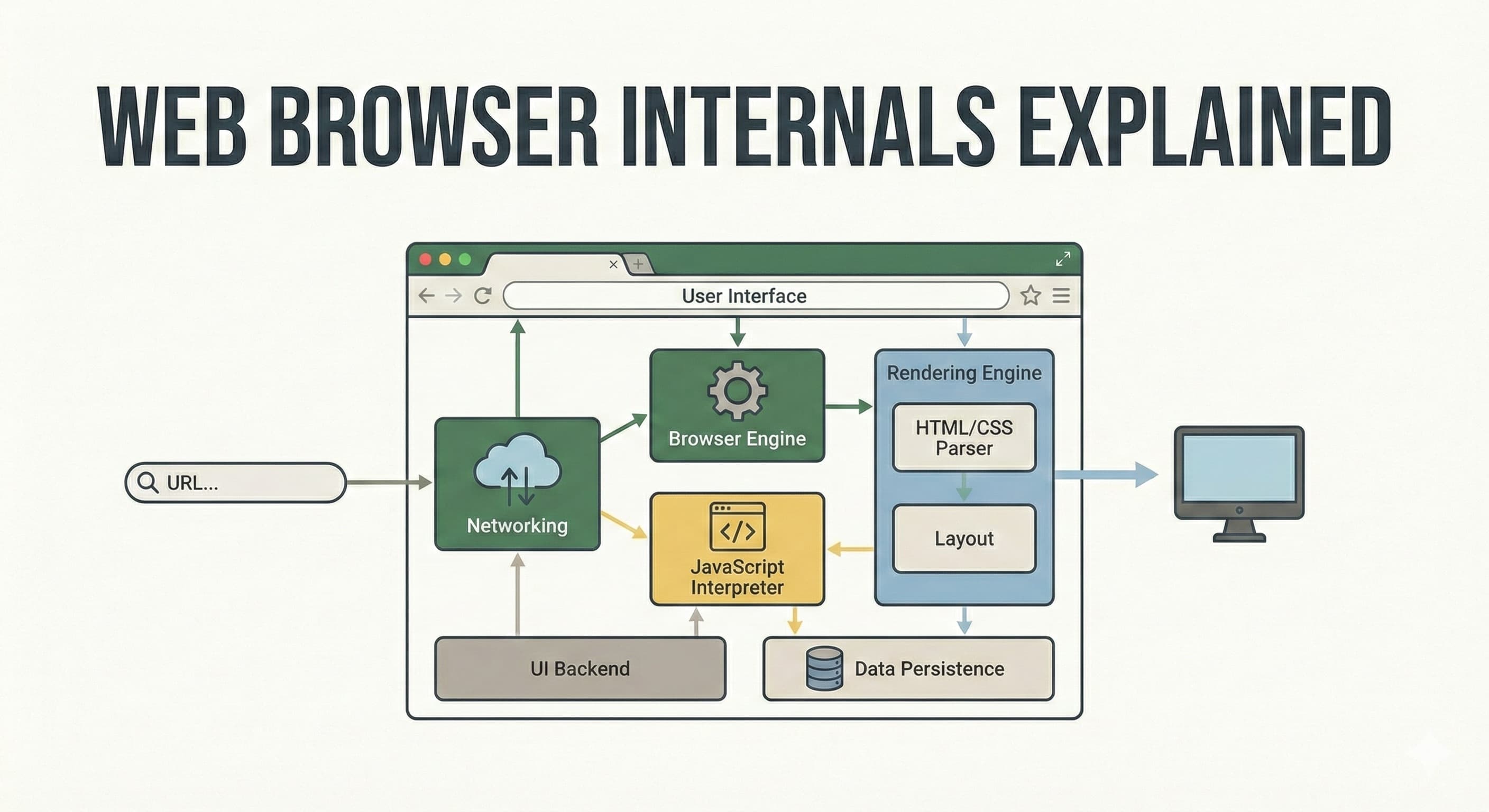

Take the image above. Whenever I say "France," the first thing that usually comes to mind is "Eiffel Tower." Mom, have you ever wondered why? It's because our minds are good at forming relationships between words. France is a country and the Eiffel Tower is a monument, so on the vector map they are separate points. This is the beauty of vector embeddings: even though the two are kept apart, the map still lets us infer that there's a connection between "France" and "Eiffel Tower."

Numbers That Capture Meaning

A vector is basically a list of numbers, like [0.2, 0.5, 0.8]. At first glance the numbers look random, but together they encode meaning.

For example:

Words that mean similar things will have vectors that point in similar directions.

Pictures of cats will have vectors that are closer to each other than to the vectors of pictures of dogs.

The computer doesn’t “understand” the way we do; it just notices patterns in the numbers.
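One common way to check whether two vectors "point in similar directions" is cosine similarity. The Python sketch below uses made-up three-number vectors for the words "happy," "glad," and "table"; the words and values are assumptions chosen only to show the idea, not output from any real model.

```python
import math

def cosine_similarity(a, b):
    """Score how closely two vectors point the same way: 1.0 means identical direction."""
    dot = sum(x * y for x, y in zip(a, b))
    length_a = math.sqrt(sum(x * x for x in a))
    length_b = math.sqrt(sum(x * x for x in b))
    return dot / (length_a * length_b)

# Invented vectors: "happy" and "glad" are meant to point roughly the same way,
# while "table" points somewhere else.
happy = [0.9, 0.1, 0.2]
glad = [0.8, 0.2, 0.2]
table = [0.1, 0.9, 0.7]

print(f"happy vs glad:  {cosine_similarity(happy, glad):.2f}")   # → happy vs glad:  0.99
print(f"happy vs table: {cosine_similarity(happy, table):.2f}")  # → happy vs table: 0.30
```

Cosine similarity only cares about direction, not length, so [1, 2, 3] and [2, 4, 6] score 1.0 (up to floating-point rounding) even though one is twice as long as the other.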

Why This Is Useful

When we give a query to a Transformer model like GPT, embeddings make it much easier for the model to understand the context of the query and how each token (a word or piece of a word) relates to the others, even when on the surface they don’t look the same. Once the tokens are placed on the vector map, the model can find the relationships between different words more easily, which helps GPT give better responses.

A Simple Analogy

Imagine you have a box of chocolates. Each chocolate has a tag: flavor, sweetness, color. If you want a chocolate that is similar to your favorite, you just look for the chocolates whose tags are closest to your favorite’s. That’s what vector embeddings do: they give each item a “tag” that’s really a bunch of numbers, so the computer can find similar things easily.
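Here is the chocolate analogy as a small Python sketch. It assumes each tag is turned into three scores from 0 to 10 (cocoa flavor, sweetness, darkness of color); the chocolate names and scores are invented for this example. Finding "the most similar chocolate" then just means picking the one whose numbers are closest.

```python
import math

# Each chocolate's "tag" as numbers: (cocoa flavor, sweetness, darkness of color),
# each scored 0-10. The names and scores are made up for this example.
box = {
    "Dark truffle": (8, 3, 9),
    "Milk caramel": (5, 8, 5),
    "White vanilla": (3, 9, 1),
    "Dark sea salt": (7, 4, 8),
}

def most_similar(favorite, box):
    """Return the other chocolate whose tag is closest to the favorite's tag."""
    favorite_tag = box[favorite]
    others = [name for name in box if name != favorite]
    # Smallest straight-line distance between tags = most similar chocolate.
    return min(others, key=lambda name: math.dist(favorite_tag, box[name]))

print(most_similar("Dark truffle", box))  # → Dark sea salt
```

Real systems do the same kind of search over embeddings with hundreds of numbers each, and they often use specialized indexes so they can search millions of items quickly.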

Wrapping It Up

So, Mom, vector embeddings are basically a way to turn messy, complicated things (words, pictures, music) into numbers so computers can measure how similar they are. Think of it as a giant invisible map where everything has a spot, and things that are alike naturally cluster together.